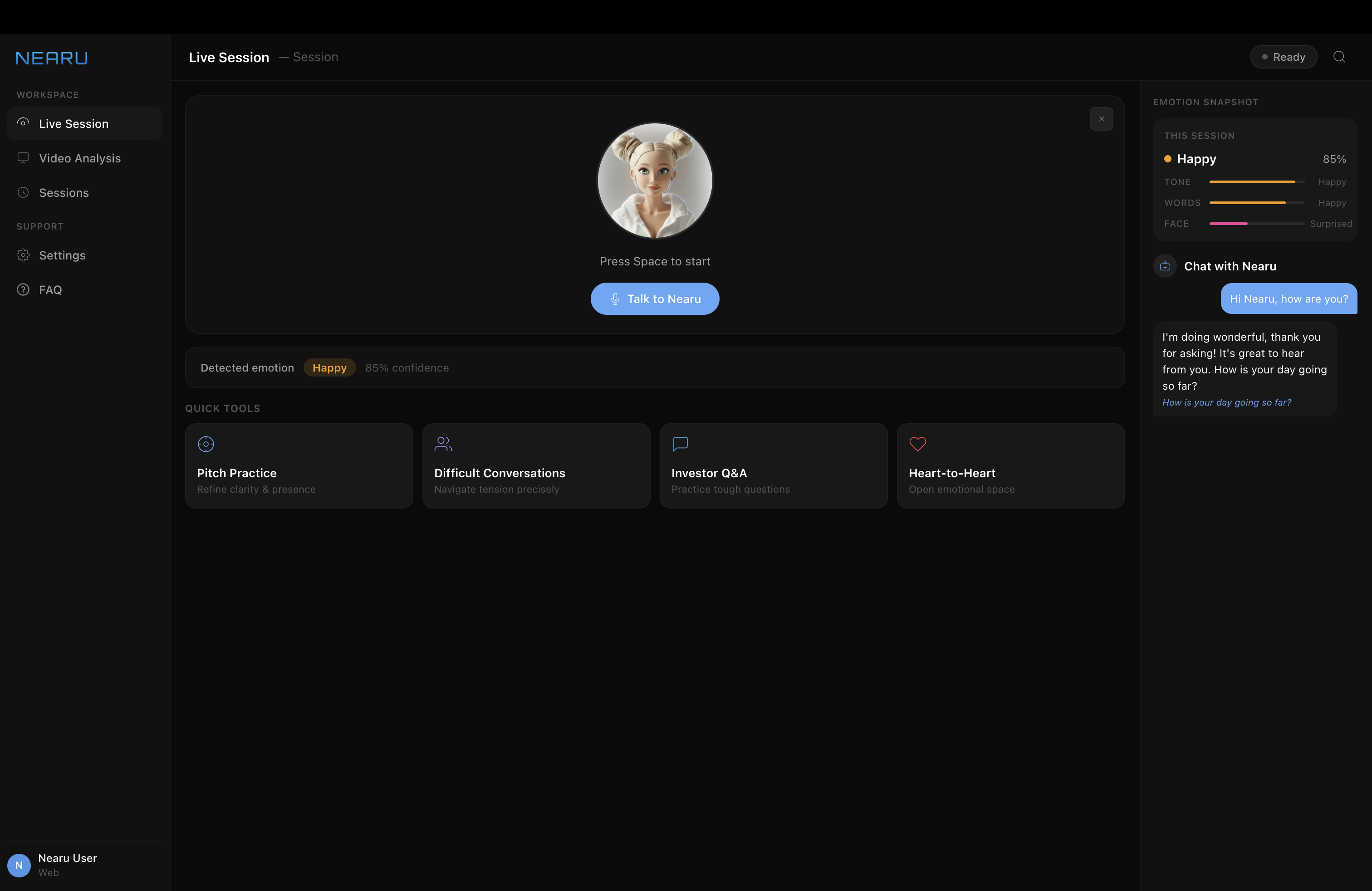

Employee Experience

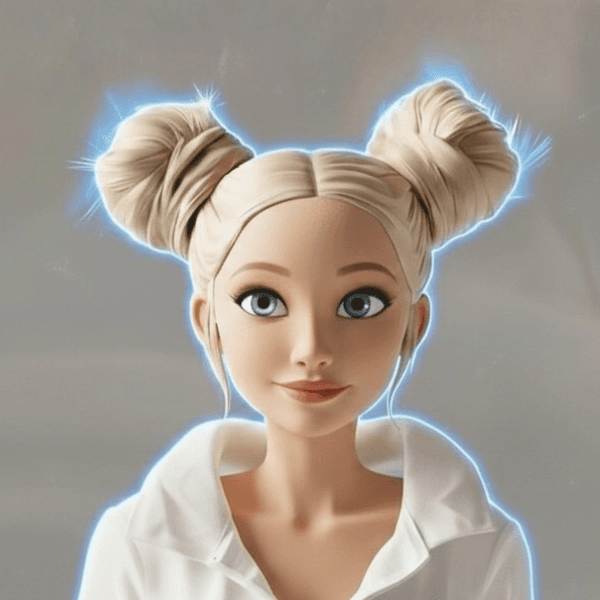

Avatar guides new hires from day one — contextually aware, emotionally present. Help arrives before the question is asked.

Emotional Intelligence Infrastructure for Embodied AI

AI models provide intelligence. Nearu provides presence, empathy, and trust — the layer that turns AI agents into entities people actually bond with.

The robotics and AI industry is building brilliance. Nobody is building the emotional layer that makes it human.

Today's AI assistants can answer any question — but they can't read the room. They miss tone, emotion, and human context entirely.

Every session resets to zero. No memory of who you are, how you felt, or what matters to you. Engagement drops. Trust never forms.

LLMs provide raw intelligence. Hardware provides presence. But the emotional intelligence layer — empathy, personality, expression — doesn't exist yet.

Nearu provides the emotional intelligence infrastructure layer that sits between humans and any intelligent system. It gives AI agents the ability to recognize emotion, respond with empathy, develop a unique personality over time, and build persistent, evolving relationships with users.

The "limbic system" of the machine. NearuVibe™ intercepts audio and video signals before the LLM — reading human emotion in real time and converting it into an EQ signal that shapes how the agent responds.

Prosody — tone, pitch, volume, pace, tremor

Nearu treats the LLM as a swappable brain. Persona, memory, and emotional layer survive model swaps — no rewiring required.
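One way to picture the "swappable brain" idea is an agent object that owns the persona and memory itself and only borrows the model. The sketch below is illustrative, not Nearu's actual API: `Agent`, `LLMBackend`, `swap_backend`, and the two toy model functions are hypothetical names, and the "models" are stand-in functions rather than real LLM clients.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Assumption: a backend is any function that maps a prompt string to a reply.
LLMBackend = Callable[[str], str]


@dataclass
class Agent:
    """Hypothetical agent: persona and memory live outside the model."""
    persona: str
    backend: LLMBackend
    memory: List[str] = field(default_factory=list)

    def respond(self, user_text: str, emotion: str) -> str:
        # Build the prompt from state the agent owns, then call the model.
        prompt = (
            f"Persona: {self.persona}\n"
            f"Known about user: {'; '.join(self.memory) or 'nothing yet'}\n"
            f"Detected emotion: {emotion}\n"
            f"User: {user_text}"
        )
        reply = self.backend(prompt)
        # Record the turn so it is available to whichever model comes next.
        self.memory.append(f"user felt {emotion} when saying: {user_text}")
        return reply

    def swap_backend(self, backend: LLMBackend) -> None:
        # Only the model changes; persona and memory are untouched.
        self.backend = backend


def toy_model_a(prompt: str) -> str:
    # Stand-in for a real LLM call: echoes the last prompt line.
    return "A:" + prompt.splitlines()[-1]


def toy_model_b(prompt: str) -> str:
    # A second stand-in with different behavior: counts prompt lines.
    return "B:" + str(len(prompt.splitlines()))
```

Calling `agent.swap_backend(toy_model_b)` between two `respond` calls leaves `agent.persona` and `agent.memory` intact, which is the property the paragraph above describes.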

A complete emotional intelligence layer — from real-time conversation to deep video analysis. Built for developers, designed for humans.

As you speak, NearuVibe™ analyzes your voice, words, and expressions simultaneously. Three independent channels. One interpreted emotional state. Nearu responds with genuine empathy — not scripted replies.

Same user. Same words. Two completely different experiences.

Reads frustration and confidence signals — not just clicks — to adapt instruction in the moment. A trainer that feels when you're lost.

Maintains active social presence during processing. Fills the silence. Higher CSAT, fewer escalations, deeper trust.

EQ layer for remote diagnostic agents — detecting emotional distress and escalating to human care when needed.

Reads buying intent and mirrors the emotional register of a skilled sales rep. Visitors engage longer because they feel genuinely met.

Persistent memory and evolving emotional model. The relationship compounds — building familiarity and trust that drives category loyalty.

Emotion-aware voice AI for the cabin — detects driver stress, fatigue, or frustration and adapts suggestions. Keeps interactions calm and focused for safer driving.

The evolution of AI isn't just technical — it's relational. Every generation has moved closer to something people can actually bond with.

The shift from functional AI to relational AI is the defining product transition of this decade. Empathy is becoming the primary differentiator for user retention in AI.

Multi-channel emotion recognition that processes signals in real time.

Works with any LLM — GPT, Claude, Gemini, local. Persona and emotional layer survive model swaps.

Identity and relationship history that carries across sessions. No more reset to zero.

Voice prosody — pitch, pace, tremor — so the system hears how you feel, not just what you say.

Acoustic, semantic, and facial emotion fused with confidence weighting for higher accuracy.
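As a rough illustration of confidence-weighted fusion, the sketch below combines per-channel emotion scores using a confidence-weighted average. This is a generic technique and an assumption about what "fused with confidence weighting" could look like; the function name `fuse_channels`, the channel names, and the numbers are hypothetical and do not come from Nearu.

```python
from typing import Dict, Tuple

# Assumption: each channel reports per-label scores plus one confidence in [0, 1].
ChannelReading = Tuple[Dict[str, float], float]


def fuse_channels(channels: Dict[str, ChannelReading]) -> Dict[str, float]:
    """Confidence-weighted average of label scores across channels."""
    total_conf = sum(conf for _, conf in channels.values())
    if total_conf <= 0:
        raise ValueError("at least one channel needs positive confidence")

    fused: Dict[str, float] = {}
    for scores, conf in channels.values():
        for label, score in scores.items():
            # Channels with higher confidence contribute more to each label.
            fused[label] = fused.get(label, 0.0) + conf * score

    # Normalize by total confidence so the result stays on the input scale.
    return {label: value / total_conf for label, value in fused.items()}


# Made-up example readings for the three channels named in the text.
readings = {
    "acoustic": ({"frustrated": 0.8, "calm": 0.2}, 0.5),
    "semantic": ({"frustrated": 0.2, "calm": 0.8}, 0.25),
    "facial": ({"frustrated": 0.6, "calm": 0.4}, 0.25),
}
fused = fuse_channels(readings)
```

With these made-up inputs the acoustic channel carries half the total weight, so the fused estimate leans toward "frustrated" even though the semantic channel disagrees.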

Clean API response with labels, confidence scores, trend, and evidence summary for every call.
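To make the four response parts concrete, here is a sketch of how a client might model such a response. The page names the parts (labels, confidence scores, trend, evidence summary) but not the schema, so every field name, the JSON layout, and the sample values below are assumptions for illustration only.

```python
import json
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class EmotionResponse:
    """Hypothetical client-side model of one emotion-analysis response."""
    labels: List[str]             # detected emotion labels
    confidence: Dict[str, float]  # confidence score per label
    trend: str                    # direction of change, e.g. "rising"
    evidence_summary: str         # short human-readable justification


def parse_response(raw: str) -> EmotionResponse:
    # Parse a JSON payload into the typed structure above.
    data = json.loads(raw)
    return EmotionResponse(
        labels=list(data["labels"]),
        confidence=dict(data["confidence"]),
        trend=data["trend"],
        evidence_summary=data["evidence_summary"],
    )


def top_label(resp: EmotionResponse) -> str:
    # Pick the label with the highest confidence score.
    return max(resp.confidence, key=resp.confidence.get)


# Invented sample payload; not real API output.
sample = json.dumps({
    "labels": ["frustrated", "calm"],
    "confidence": {"frustrated": 0.72, "calm": 0.28},
    "trend": "rising",
    "evidence_summary": "Faster pace and raised pitch over recent turns.",
})
resp = parse_response(sample)
```

A typed wrapper like this lets downstream code branch on `top_label(resp)` and `resp.trend` without touching raw JSON.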

THE TEAM

We're looking for design partners, robotics OEMs, and investors who believe the next frontier of AI is relational.